Meta, the American tech giant, is being investigated by European Union regulators for the spread of disinformation on its platforms Facebook and Instagram, poor oversight of deceptive advertisements and potential failure to protect the integrity of elections.

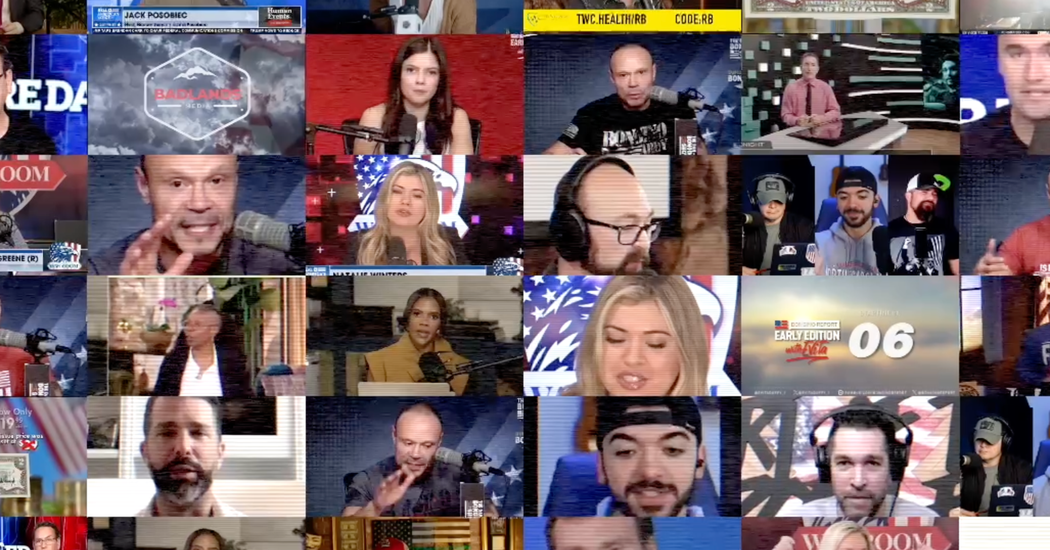

On Tuesday, European Union officials said Meta does not appear to have sufficient safeguards in place to combat misleading advertisements, deepfakes and other deceptive information that is being maliciously spread online to amplify political divisions and influence elections.

The announcement appears intended to pressure Meta to do more ahead of this summer's elections, held across all 27 E.U. countries, for new members of the European Parliament. The vote, taking place from June 6 to 9, is being closely watched for signs of foreign interference, particularly from Russia, which has sought to weaken European support for the war in Ukraine.

The Meta investigation shows how European regulators are taking a more aggressive approach to regulating online content than authorities in the United States, where free speech and other legal protections limit the role the government can play in policing online discourse. A new E.U. law, called the Digital Services Act, took effect last year and gives regulators broad authority to rein in Meta and other large online platforms over the content shared through their services.

“Big digital platforms must live up to their obligations to put enough resources into this, and today’s decision shows that we are serious about compliance,” Ursula von der Leyen, the president of the European Commission, the E.U.’s executive branch, said in a statement.

European officials said Meta must address weaknesses in its content moderation system to better identify malicious actors and take down concerning content. They noted a recent report by AI Forensics, a civil society group in Europe, that identified a Russian information network that was purchasing misleading ads through fake accounts and other methods.

European officials also said Meta appeared to be diminishing the visibility of political content, a practice that could have harmful effects on the electoral process. Authorities said the company must provide more transparency about how such content spreads.

Meta defended its policies and said it acts aggressively to identify and block disinformation from spreading.

“We have a well established process for identifying and mitigating risks on our platforms,” the company said in a statement. “We look forward to continuing our cooperation with the European Commission and providing them with further details of this work.”

The Meta inquiry is the latest announced by E.U. regulators under the Digital Services Act. The content moderation practices of TikTok and X, formerly known as Twitter, are also being investigated.

The European Commission can fine companies up to 6 percent of global revenue under the digital law. Regulators can also raid a company’s offices, interview company officials and gather other evidence. The commission did not say when the investigation would end.

Social media platforms are under immense pressure this year as billions of people around the world vote in elections. The techniques used to spread false information and conspiracies have grown more sophisticated — including new artificial intelligence tools to produce text, videos and audio — but many companies have scaled back their election and content moderation teams.

European officials noted that Meta had reduced access to CrowdTangle, a Meta-owned service that governments, civil society groups and journalists use to monitor disinformation on the company's platforms.